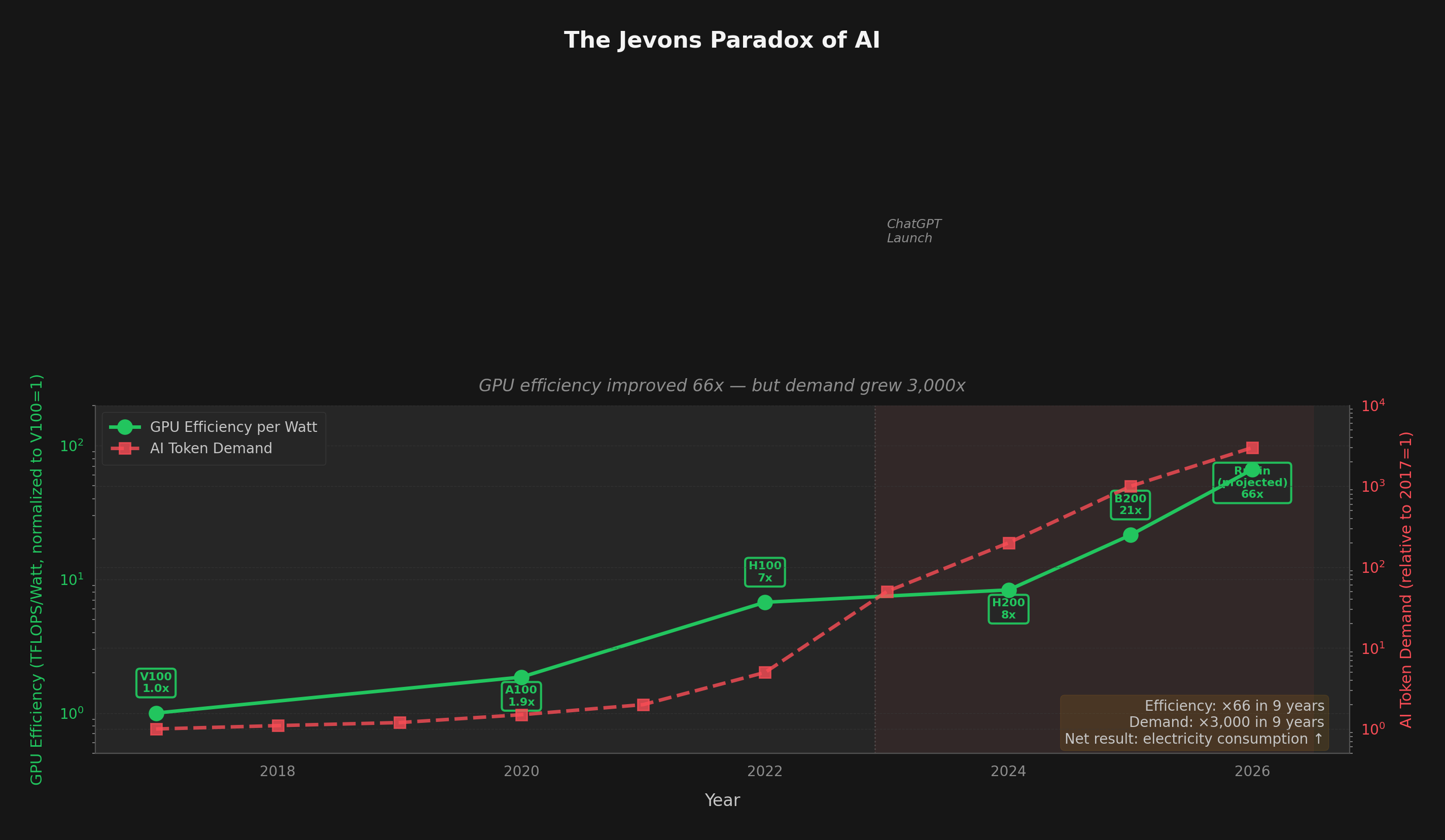

GPU efficiency has improved 66x in 9 years†. Token demand has grown 3,000x. The math doesn't work — and the grid is already feeling it.

February 4, 2026 · 12 min read · Data from EIA, EPRI/DOE, IEA, NVIDIA, Epoch AI

In 1865, economist William Stanley Jevons observed something counterintuitive: as coal engines became more efficient, total coal consumption increased rather than decreased. The improved efficiency made coal cheaper, which expanded its use far beyond what the efficiency gains could offset.

In 2026, we're watching the same paradox play out in AI — at a pace Jevons could never have imagined.

Satya Nadella invoked Jevons explicitly after the DeepSeek efficiency breakthrough in early 2025:

"As AI gets more efficient and accessible, we will see its use skyrocket, turning it into a commodity we just can't get enough of."

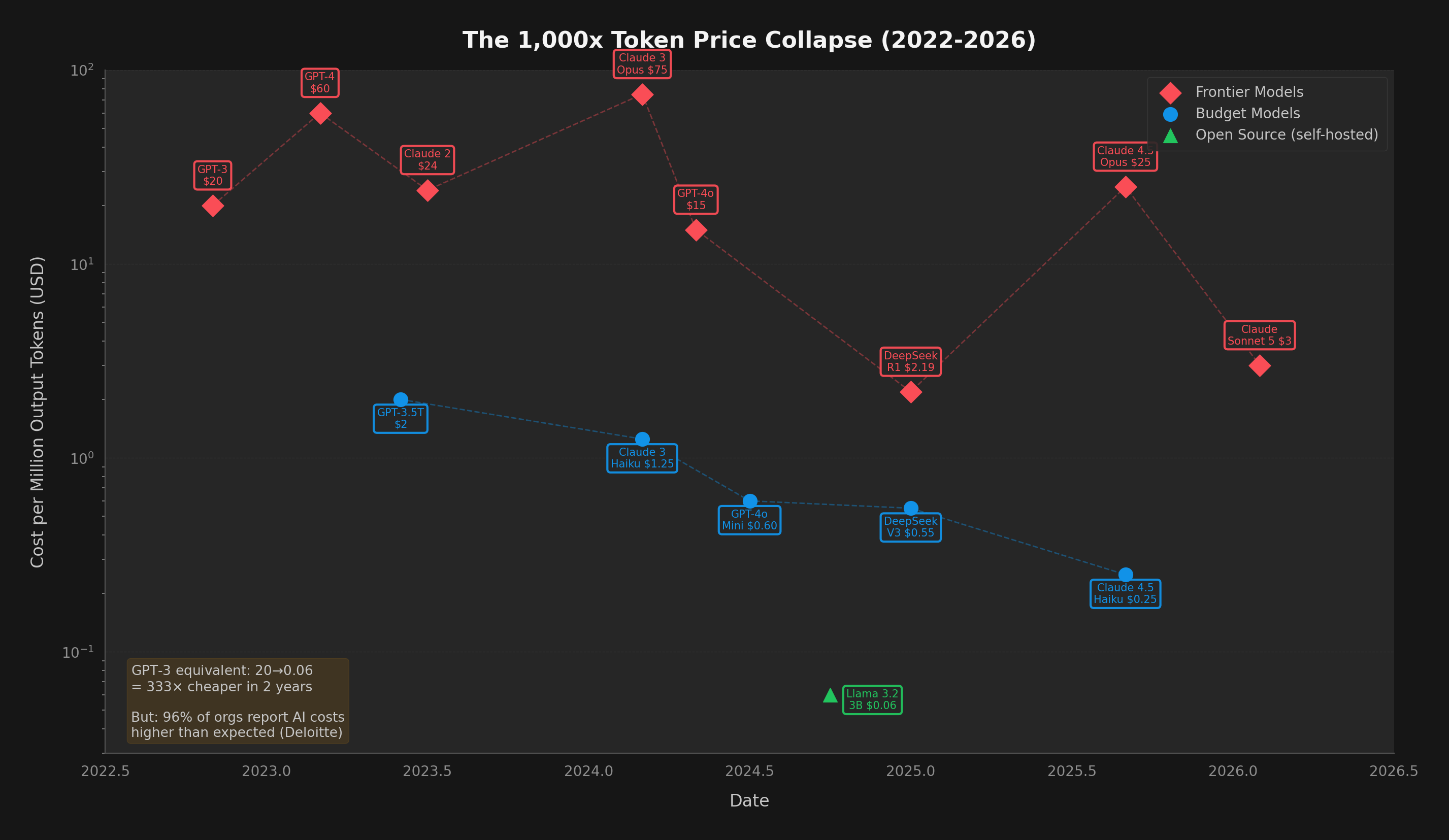

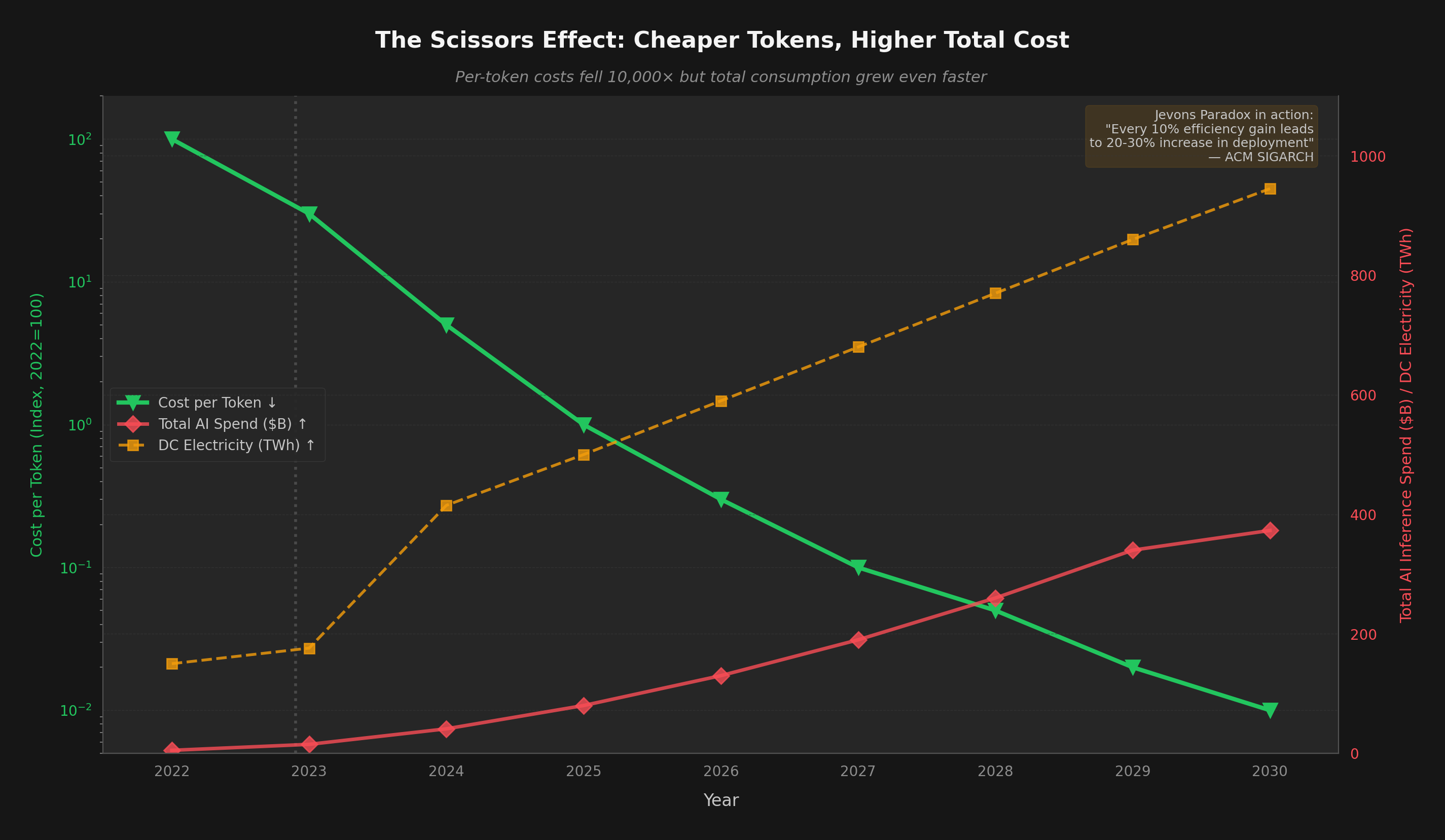

The data backs him up. According to ACM SIGARCH research, every 10% improvement in AI computing efficiency has historically led to a 20-30% increase in overall deployment and usage. Inference costs fell 1,000x in three years — but demand rose 10,000x.
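As a rough illustration of that rebound arithmetic, the sketch below combines a 10% efficiency gain with a 20-30% rise in usage. It assumes a "10% improvement" means each unit of work needs 10% less energy; the function name `net_energy_change` is ours and does not come from the cited research.

```python
def net_energy_change(efficiency_gain: float, usage_increase: float) -> float:
    """Return the fractional change in total energy use.

    Assumption: an efficiency gain of g means each unit of work needs
    (1 - g) times the energy it needed before.
    """
    energy_per_unit = 1.0 - efficiency_gain
    units_of_work = 1.0 + usage_increase
    return energy_per_unit * units_of_work - 1.0


low = net_energy_change(0.10, 0.20)   # 0.9 * 1.2 - 1
high = net_energy_change(0.10, 0.30)  # 0.9 * 1.3 - 1
print(f"Net energy change: {low:+.0%} to {high:+.0%}")
```

Under this reading, total energy use still rises by roughly 8% to 17% for every 10% efficiency gain.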

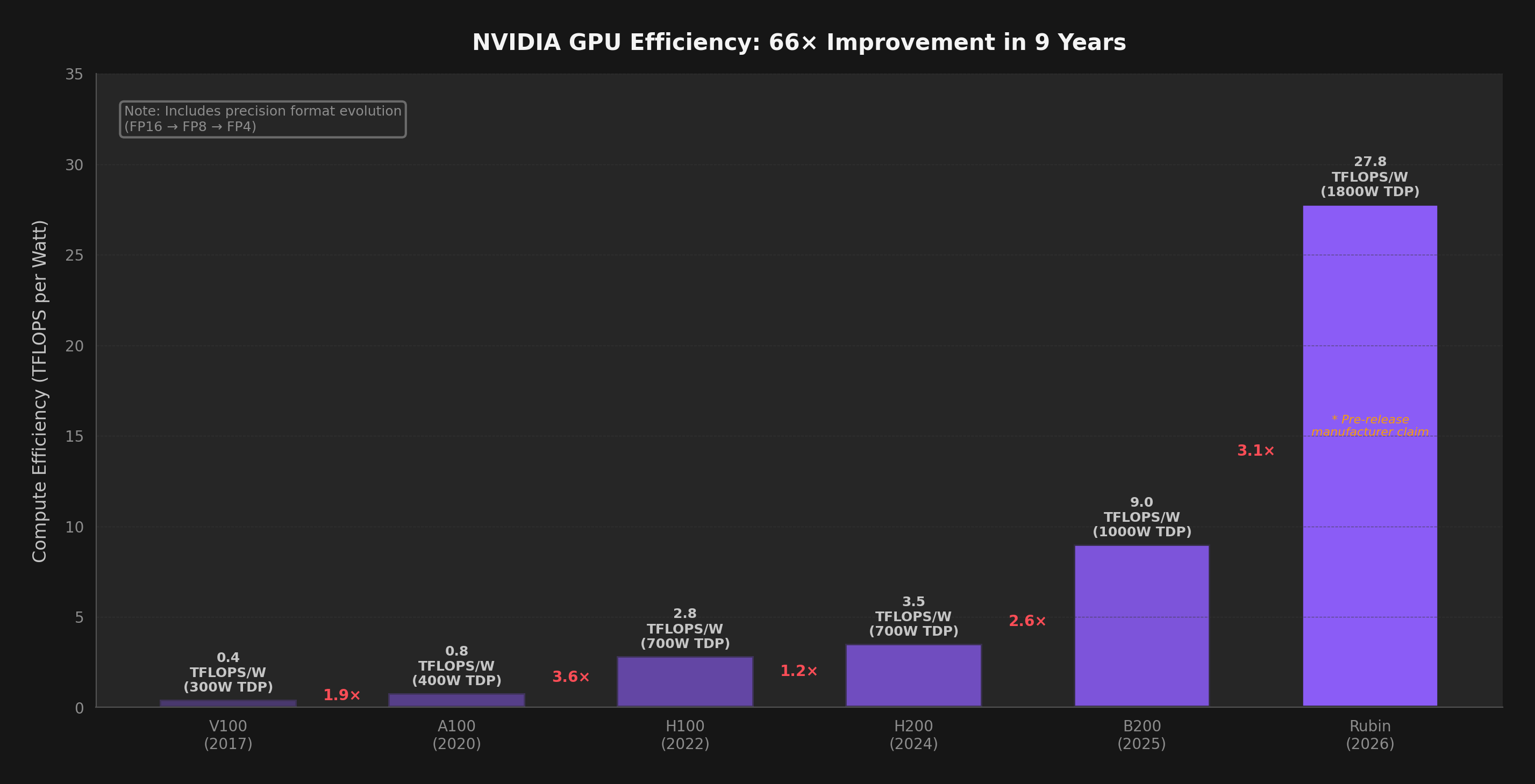

Let's start with what the optimists get right. The pace of GPU and TPU efficiency improvement is genuinely remarkable — perhaps the most aggressive hardware improvement curve since the early days of Moore's Law.

The token cost decline has been equally dramatic:

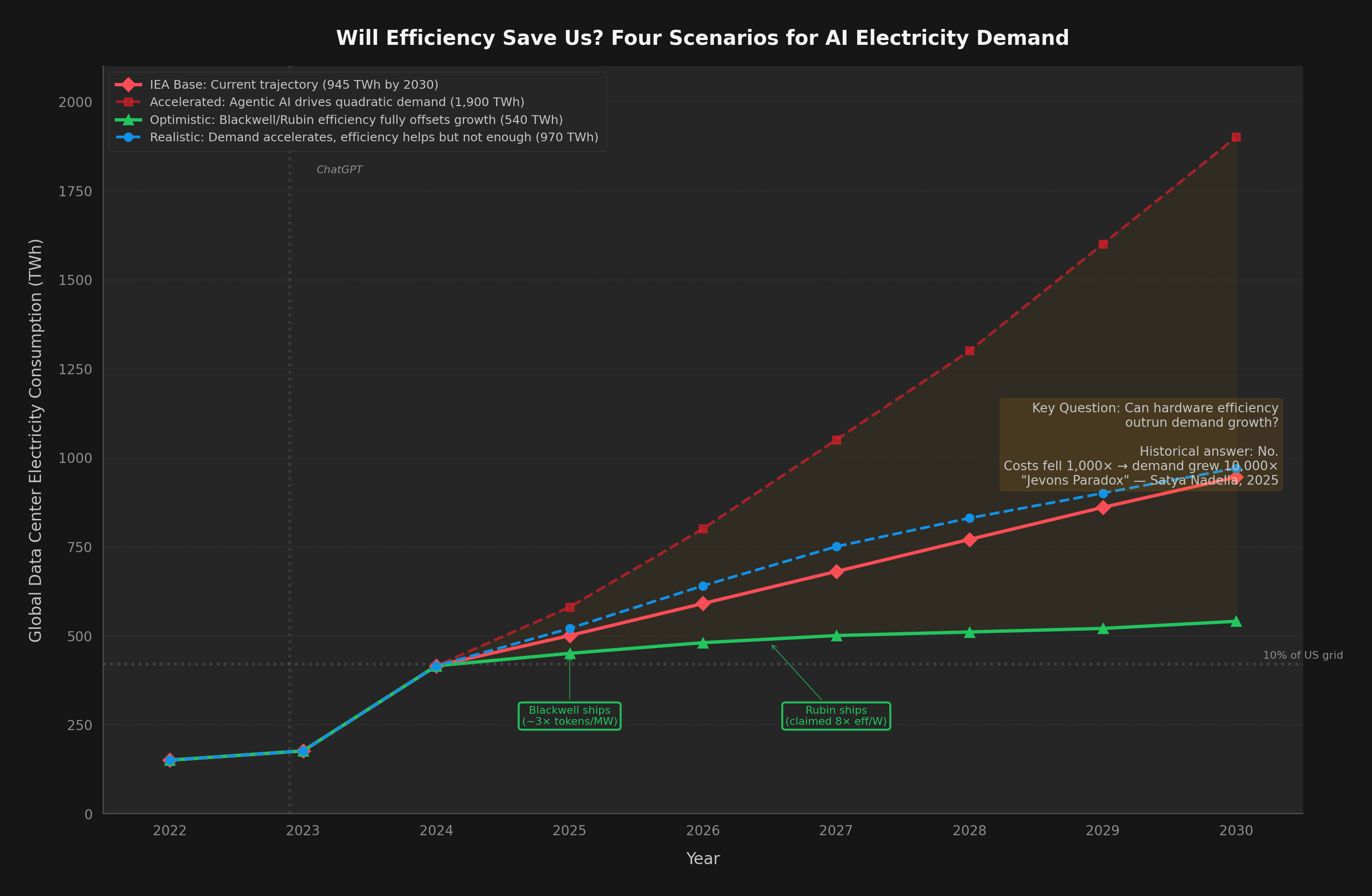

If you froze AI demand at 2024 levels and deployed Blackwell + Rubin hardware to replace existing data center fleets, total AI electricity consumption would fall by roughly 70-80%. The hardware is genuinely, dramatically more efficient.
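One way to read the 70-80% figure is to ask how much demand growth it would take to erase the saving. The sketch below is a simple inversion under the assumption that total energy scales linearly with demand; `breakeven_demand_growth` is an illustrative name, not a published metric.

```python
def breakeven_demand_growth(energy_reduction: float) -> float:
    """Demand multiple at which total energy returns to its starting level.

    If new hardware cuts energy per unit of work by `energy_reduction`
    (0.75 means 75%), demand can grow by 1 / (1 - energy_reduction)
    before total consumption is back where it started.
    """
    if not 0.0 <= energy_reduction < 1.0:
        raise ValueError("energy_reduction must be in [0, 1)")
    return 1.0 / (1.0 - energy_reduction)


for cut in (0.70, 0.80):
    multiple = breakeven_demand_growth(cut)
    print(f"{cut:.0%} cut -> break-even at {multiple:.1f}x demand")
```

In other words, a fleet-wide saving of this size is cancelled out once demand grows by roughly 3.3x to 5x.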

But of course, demand isn't frozen. Not even close.

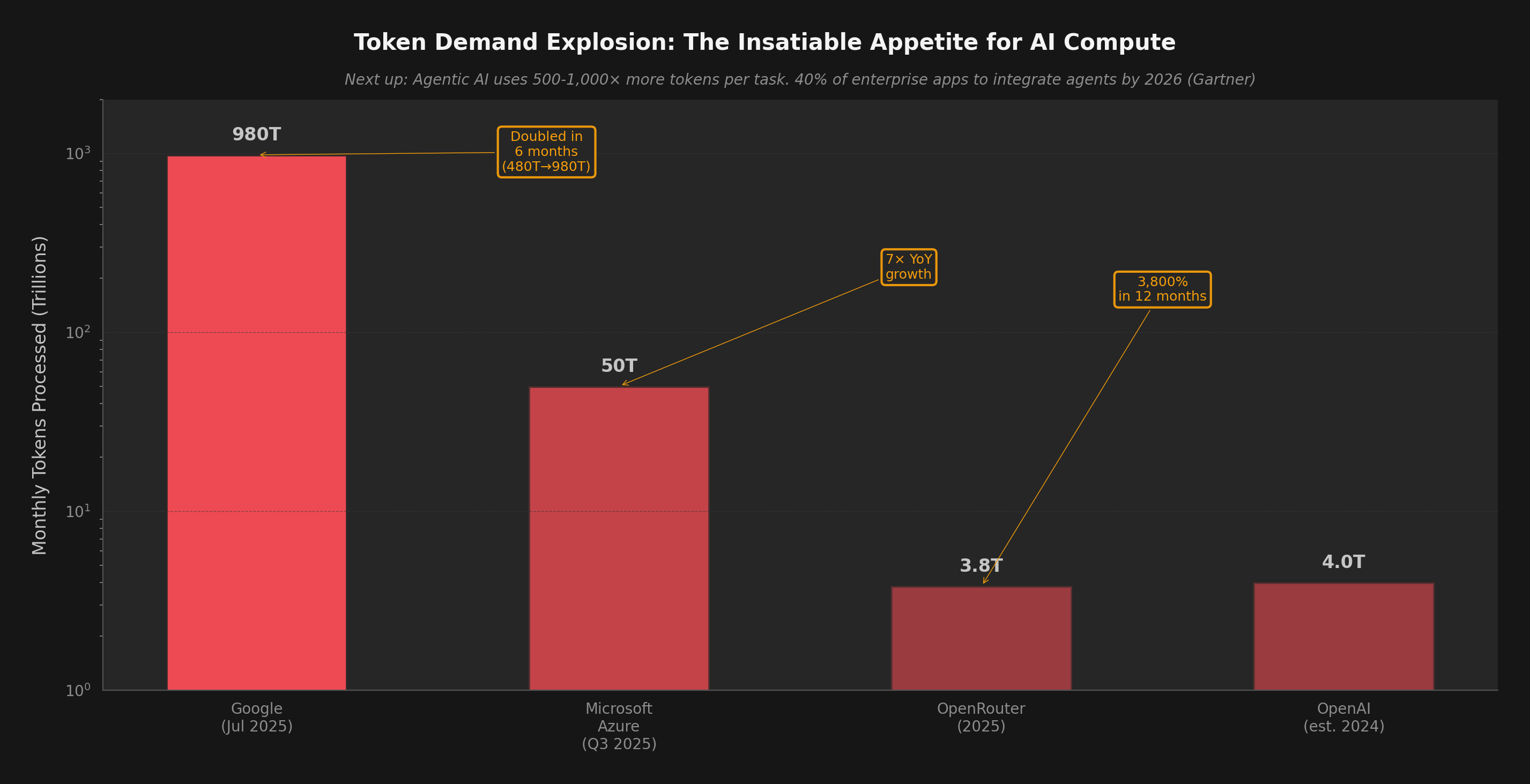

The scale of AI token generation is difficult to comprehend. Here's what the hyperscalers reported in their most recent earnings calls:

These numbers are staggering, but they represent mostly chat-based interactions. The next wave — agentic AI — is orders of magnitude more token-intensive:

A typical chat interaction uses 500-1,000 tokens. An agentic coding session (like Claude Code resolving a GitHub issue) uses 500,000 to 2,000,000 tokens — a 1,000x increase per task. Gartner predicts 40% of enterprise apps will integrate AI agents by end of 2026, up from <5% in 2025.

This creates the scissors effect — where per-unit costs fall but total expenditure rises:

"Inference costs fell 1,000x in 3 years, but demand rose 10,000x — net energy consumption increased dramatically."

Enterprise surveys consistently find that a majority of organizations report generative AI costs higher than expected at production scale, precisely because cheaper tokens drive disproportionately more usage. As BCG's 2025 AI Radar survey noted, only 26% of companies have successfully moved past the pilot stage to capture meaningful value.
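The scissors effect reduces to a single division. This is a minimal sketch that assumes total spend (or energy) is simply unit cost times units consumed; the function name is ours.

```python
def total_spend_multiple(cost_reduction: float, demand_growth: float) -> float:
    """Total expenditure relative to the starting point.

    cost_reduction: factor by which per-unit cost fell (1_000 means 1,000x cheaper)
    demand_growth:  factor by which units consumed grew
    """
    if cost_reduction <= 0:
        raise ValueError("cost_reduction must be positive")
    return demand_growth / cost_reduction


# Figures quoted in the text: costs fell 1,000x, demand rose 10,000x.
print(total_spend_multiple(1_000, 10_000))  # 10.0
```

Each token is 1,000 times cheaper, yet the total bill is ten times larger.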

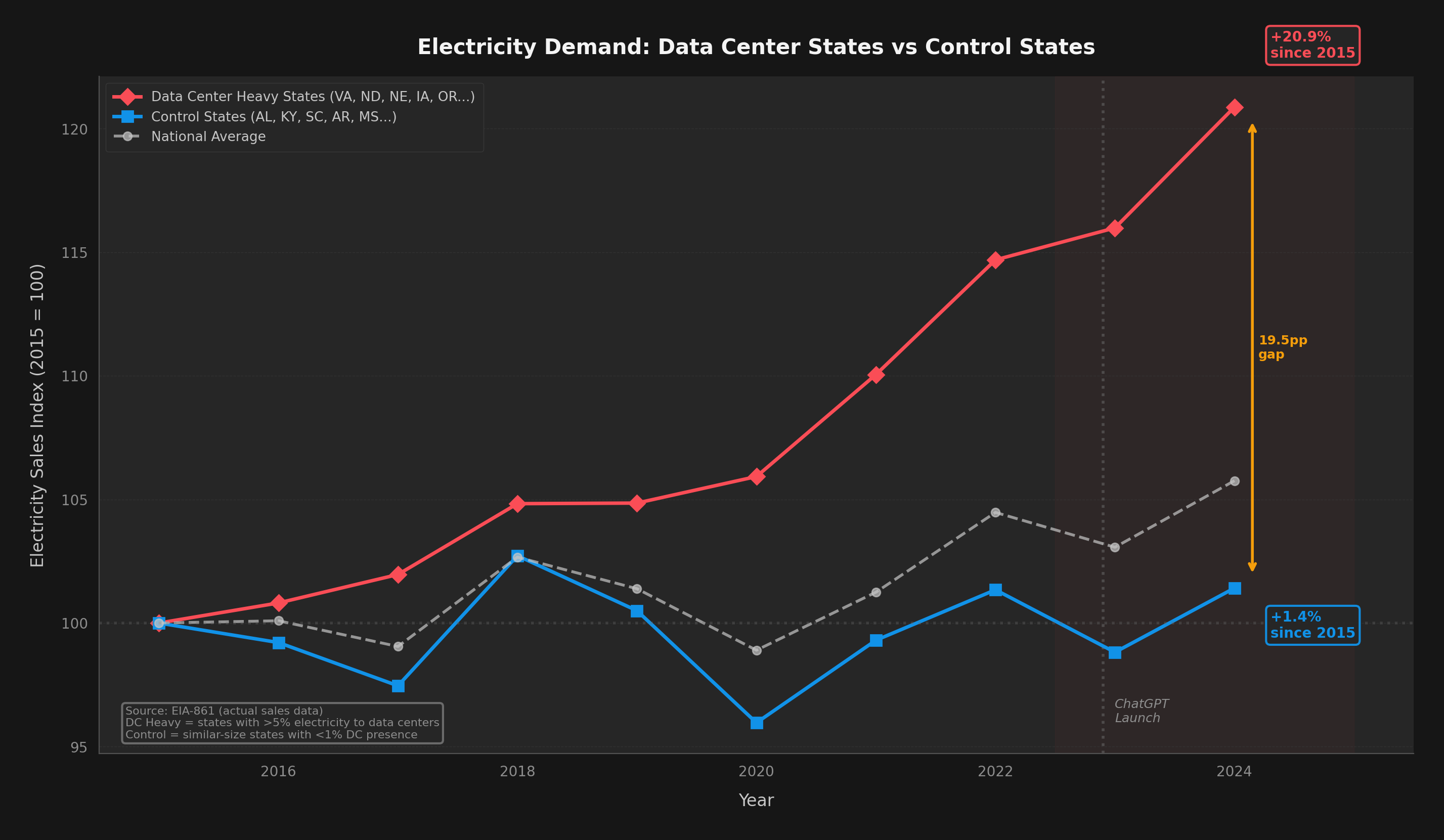

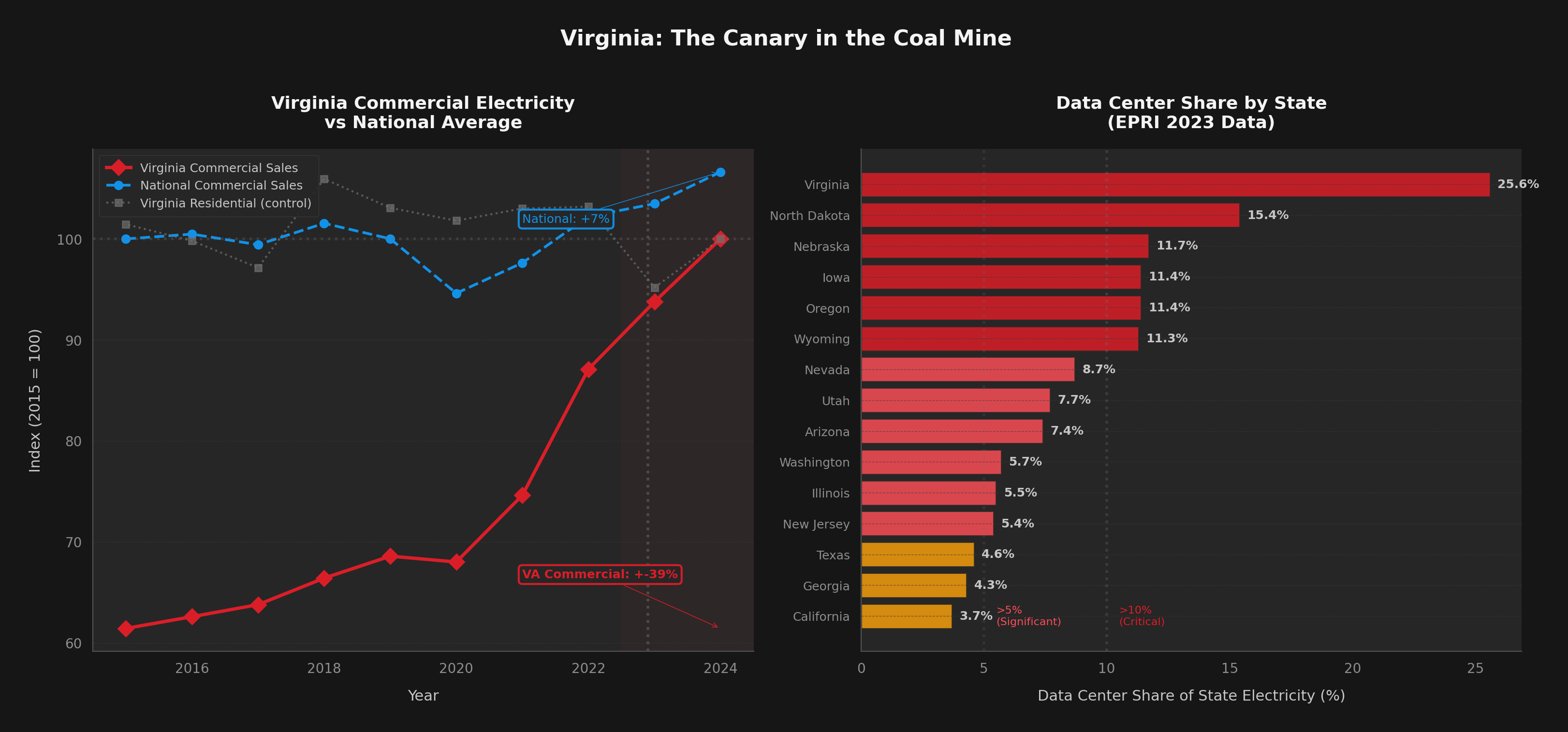

This isn't a theoretical future. The divergence between data-center-heavy states and the rest of the country is already visible in EIA electricity sales data.

We split US states into two groups using EPRI data: states where data centers consume >5% of total electricity (VA, ND, NE, IA, OR, WY, NV, AZ, UT) versus control states of similar size with <1% data center presence.
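The grouping rule can be sketched as a simple threshold function. The version below covers only the five state shares quoted elsewhere in this article; the remaining states would need the underlying EPRI figures. The `classify` helper and the "excluded" label for states between the thresholds are our assumptions, and the "similar size" matching of control states is not modeled.

```python
# Data center share of state electricity use, in percent.
# Only the five shares quoted in this article are listed here.
DC_SHARE_PCT = {"VA": 25.6, "ND": 15.4, "NE": 11.7, "IA": 11.4, "OR": 11.4}


def classify(share_pct: float, heavy_above: float = 5.0, control_below: float = 1.0) -> str:
    """Assign a state to a comparison group by its data center share."""
    if share_pct > heavy_above:
        return "data-center-heavy"
    if share_pct < control_below:
        return "control"
    return "excluded"  # between the thresholds: belongs to neither group


groups = {state: classify(share) for state, share in DC_SHARE_PCT.items()}
print(groups)

# Hypothetical low-share state; the 0.6 value is invented for illustration.
print(classify(0.6))
```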

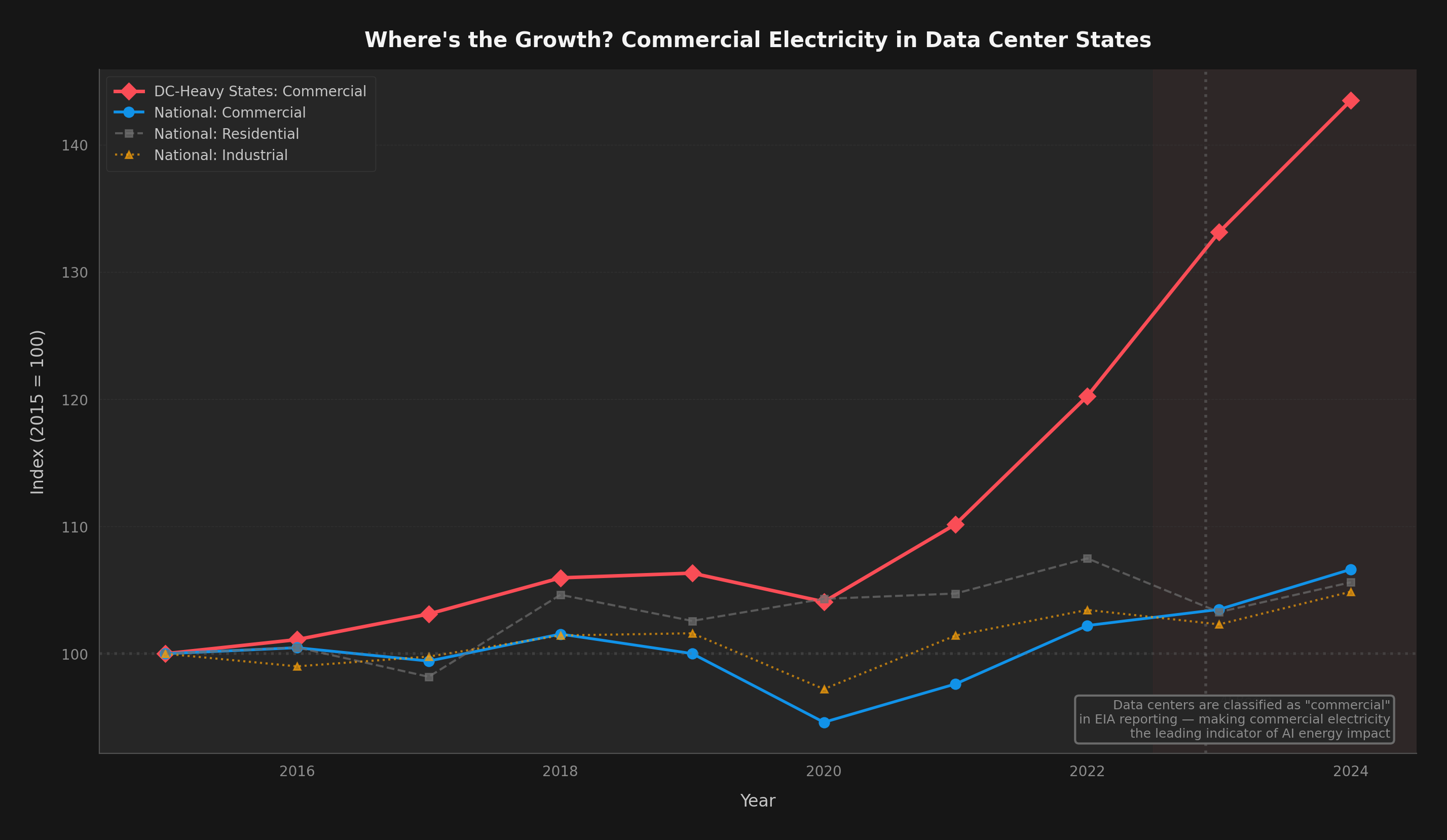

The divergence is even more dramatic when you look at commercial electricity specifically — which is the EIA category that includes data centers:

Virginia is ground zero for the AI electricity crisis. Northern Virginia (Loudoun, Prince William, and Fairfax counties) hosts the highest concentration of data centers in the world. According to EPRI, data centers already consume 25.6% of Virginia's total electricity, roughly one in every four kilowatt-hours used in the state.

A December 2024 report by Virginia's JLARC (Joint Legislative Audit and Review Commission) found that under unconstrained growth, data centers could drive a 183% increase in the state's total electricity usage by 2040. Dominion Energy's capacity auction prices for the Virginia zone have already spiked 833%.
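Percent increases of this size are easy to misread, so it can help to convert them to multiples. This is a small sketch and the helper name is ours.

```python
def increase_to_multiple(percent_increase: float) -> float:
    """Convert a percent increase into a multiple of the starting value."""
    return 1.0 + percent_increase / 100.0


for label, pct in [("Electricity usage by 2040", 183), ("Capacity auction price", 833)]:
    print(f"{label}: +{pct}% = {increase_to_multiple(pct):.2f}x the baseline")
```

A 183% increase means usage reaches 2.83 times the baseline, and an 833% spike means prices reached 9.33 times their previous level.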

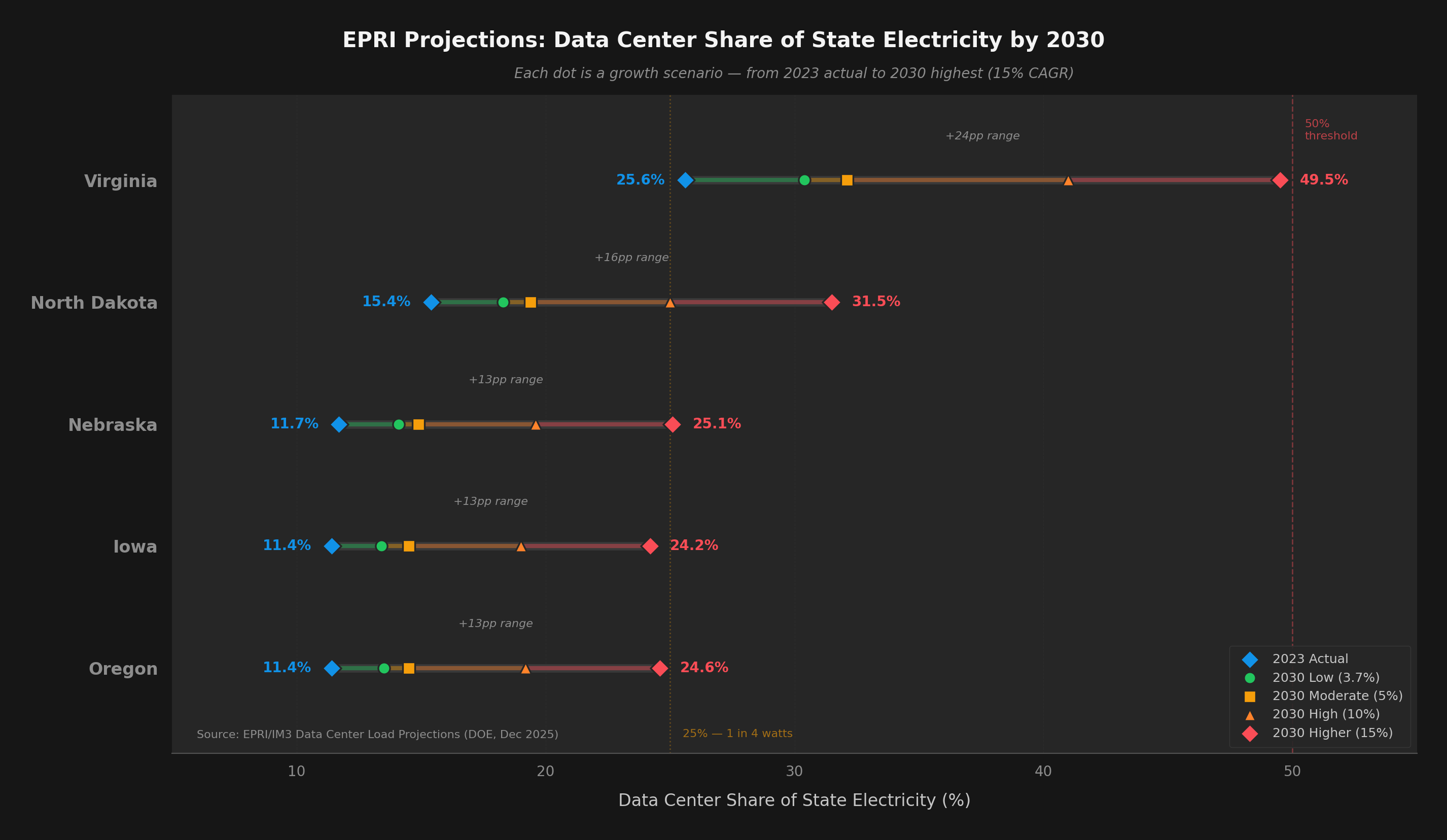

Virginia didn't plan for a quarter of its electricity to go to data centers. It happened gradually, then suddenly. The EPRI projections show other states on the same trajectory: North Dakota (15.4%), Nebraska (11.7%), Iowa (11.4%), and Oregon (11.4%) are all past the 10% mark. Under high-growth scenarios, multiple states could see 25%+ data center shares by 2030.

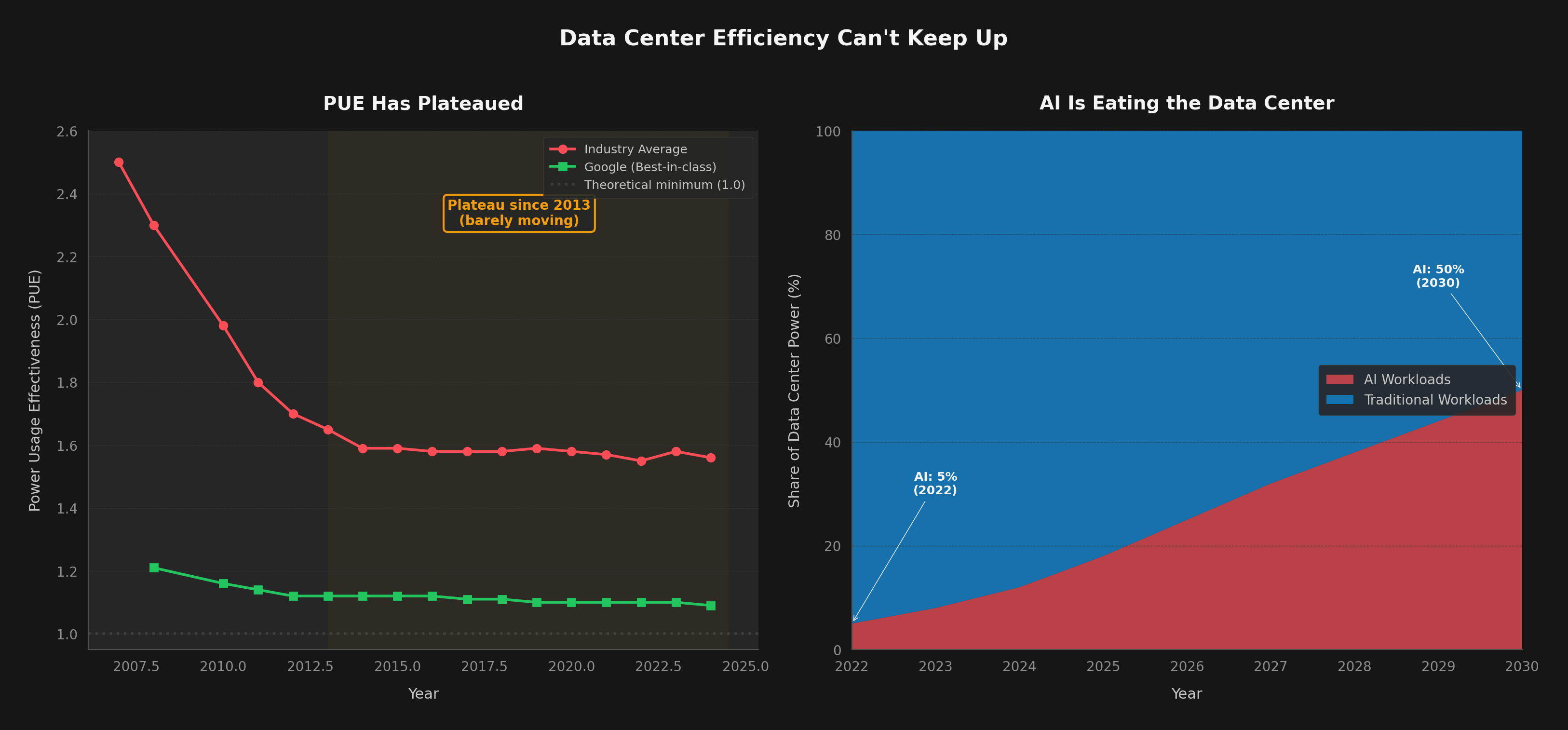

Data center operators have been improving Power Usage Effectiveness (PUE) — the ratio of total facility power to IT equipment power — for two decades. But this lever is nearly exhausted:

The uncomfortable truth is that there are only three levers to reduce AI's electricity footprint:

Hardware efficiency is improving at ~3x per GPU generation (every 2-3 years). The gains are genuine and impressive, but capped by physics. Each generation also increases TDP: V100 was 300W, Rubin is 1,800W.

Algorithmic efficiency accounts for ~35% of capability improvement since 2014 (Epoch AI). Distillation, quantization, and MoE architectures help, but each is a one-time gain per technique.

PUE has plateaued at 1.55-1.58 industry-wide since 2013, with hyperscalers at 1.09-1.12. It is approaching its theoretical limit of 1.0 and is no longer a meaningful lever.

Nobody is reducing demand. Enterprise AI adoption went from 55% to 78% in two years. 91% of developers use AI tools. Agentic workloads are 1,000x more token-intensive than chat.
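To put the PUE figures above in perspective, the sketch below computes the largest saving still available from facility overhead, using the definition of PUE as total facility power divided by IT equipment power. The function name is ours, and a PUE of exactly 1.0 is an idealized floor rather than something a real facility reaches.

```python
def max_saving_at_ideal_pue(pue: float) -> float:
    """Fraction of total facility energy saved by moving from `pue` to 1.0.

    PUE = total facility power / IT power, so IT power is 1 / PUE of the
    total and everything else is overhead that could, at best, be removed.
    """
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return 1.0 - 1.0 / pue


for label, value in [
    ("industry-wide, high", 1.58),
    ("industry-wide, low", 1.55),
    ("hyperscaler, high", 1.12),
    ("hyperscaler, low", 1.09),
]:
    print(f"{label} (PUE {value}): at most {max_saving_at_ideal_pue(value):.1%} saved")
```

For hyperscaler facilities, even a perfect PUE would trim only about 8-11% of total consumption, which is small next to the demand multiples discussed earlier.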

We modeled four scenarios for global data center electricity consumption through 2030, balancing demand growth projections against hardware efficiency improvements:

The EPRI projections for individual states tell a similarly sobering story:

PJM, the grid operator covering Virginia and 12 other states (65 million people), initially projected a 6-gigawatt shortfall below reliability requirements by 2027. After stricter data center vetting, PJM revised this to approximately 2.6 GW in late 2025 — still a significant gap. Coal plants in Kansas City and West Virginia have already delayed closures to meet AI-driven demand. Data center water consumption is projected to double or quadruple by 2028, reaching 150-280 billion liters annually in the US alone.

The question isn't whether AI electricity demand will be a problem. It already is. The question is whether your organization is positioned for a world where compute is abundant but energy-constrained.

Track electricity cost per deployed feature, not per token. A 2025 METR study found that experienced open-source developers took 19% longer to complete tasks with AI tools, even though they believed the tools made them faster. If your agents use 10x more tokens but ship the same output, that's a Jevons problem.

Blackwell delivers 2-3x real-world efficiency gains. Rubin claims 10x more. Build your inference infrastructure to swap hardware generations quickly. Efficiency gains only help if you deploy them.

The SERA paper achieved 54.2% on SWE-Bench for $2,000 in training costs. For many use cases, a fine-tuned 7B model running on-premise uses 100x less energy than a frontier API call. Not every task needs Opus.

If your cloud provider's primary region is in Virginia, Texas, or Oregon, factor in rising electricity costs and potential capacity constraints. Diversify regions. Virginia's capacity auction prices spiked 833%.

When you roll out agentic AI, expect 5-10x the compute budget of chat-based AI. A single Claude Code session can consume 2M tokens. Multiply by your engineering team size. Then multiply by continuous integration.
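A back-of-the-envelope version of that multiplication might look like the sketch below. The 2M-token default comes from the text; the team size, session count, and CI figures are hypothetical placeholders, and treating CI as an additive stream of agent runs is our simplifying assumption.

```python
def daily_agentic_tokens(
    engineers: int,
    sessions_per_engineer: int,
    tokens_per_session: int = 2_000_000,  # upper-end session size cited in the text
    ci_runs: int = 0,
    tokens_per_ci_run: int = 0,
) -> int:
    """Estimate tokens per day from interactive sessions plus CI-triggered agent runs."""
    interactive = engineers * sessions_per_engineer * tokens_per_session
    automated = ci_runs * tokens_per_ci_run
    return interactive + automated


# Hypothetical team: 50 engineers, 4 sessions each per day,
# plus 200 CI agent runs of 500k tokens each.
total = daily_agentic_tokens(50, 4, ci_runs=200, tokens_per_ci_run=500_000)
print(f"{total:,} tokens/day")  # 500,000,000 tokens/day
```

Swapping in your own headcount and pipeline volume gives a first estimate to compare against your current chat-era budget.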

Energy is becoming the binding constraint on AI scaling — not model quality, not talent, not data. Companies that optimize for energy efficiency will have a structural cost advantage by 2028.

Hardware engineers are doing extraordinary work. GPU silicon improved ~66x in compute per watt over 9 years (including the shift from FP16 to FP4 precision). But that's only part of the story — algorithmic gains (quantization, MoE, distillation) contributed another ~5x, and hyperscaler economies of scale plus competitive pricing pressure added ~3x more. Multiply them together: 66 × 5 × 3 ≈ 1,000x total reduction in the cost to run a token. NVIDIA's Blackwell and Rubin, Google's Ironwood TPU, and AMD's MI355X represent genuine breakthroughs.
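The multiplication can be checked directly. The sketch below also shows how much each factor contributes on a log scale, which is one reasonable way (our choice, not the article's) to apportion multiplicative gains.

```python
import math

# Cost-reduction factors as stated in the text.
factors = {"GPU silicon": 66, "algorithms": 5, "scale and pricing": 3}

total = math.prod(factors.values())  # 66 * 5 * 3 = 990

# Factors multiply, so shares only add up sensibly on a log scale.
log_share = {name: math.log(f) / math.log(total) for name, f in factors.items()}

print(f"Combined reduction: {total}x")
for name, share in log_share.items():
    print(f"{name}: {share:.0%} of the reduction (log scale)")
```

The product is 990x, which rounds to the 1,000x headline, and silicon accounts for roughly 61% of it on a log scale.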

But efficiency alone cannot solve the AI electricity crisis. The historical pattern is unambiguous: every time we make AI cheaper and faster, we use dramatically more of it. That 1,000x cost reduction unleashed 10,000x more demand. The grid feels the net, not the per-unit improvement.

The states where data centers are already concentrated — Virginia, Oregon, Iowa, North Dakota, Nebraska — are living previews of what the rest of the country will face by 2030. Virginia's grid didn't plan for 25% data center load. Neither did anyone else's.

"We are entering a rite of passage, both turbulent and inevitable, which will test who we are as a species."

The companies that thrive in this environment won't be the ones with the most GPUs. They'll be the ones who understand that intelligence is becoming abundant, but energy is becoming scarce — and plan accordingly.

† Note on the 66x figure: This compares V100 FP16 (~0.42 TFLOPS/W) to Rubin FP4 (~27.8 TFLOPS/W, pre-release); 27.8 / 0.42 ≈ 66. The comparison spans both silicon improvements and the evolution from FP16 to FP4 precision formats. Comparing within a single precision format (e.g., FP16 throughout) yields approximately 25-30x. Both framings are valid: real-world AI inference increasingly uses lower-precision formats, so the cross-precision comparison reflects actual deployment gains.

Published February 4, 2026 · Analysis by aictrl.dev

Charts generated from public datasets (EIA, EPRI/DOE, IEA, NVIDIA, Epoch AI). All data sources linked above.